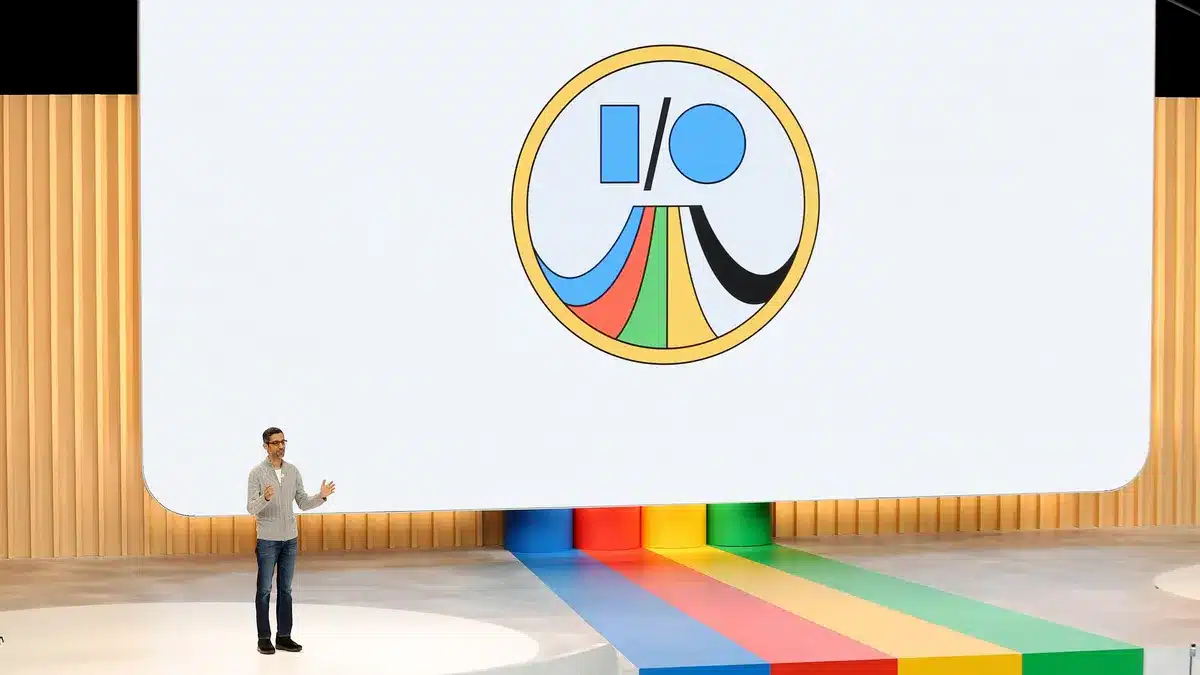

Google I/O 2025 is underway, with artificial intelligence (AI) at the forefront of the company’s announcements. Held at the Shoreline Amphitheatre in Mountain View, California, the conference began on May 20 at 10 a.m. PT (10:30 p.m. IST) and will continue through May 21. For those unable to attend in person, the event is being livestreamed on Google’s official I/O website and YouTube channel, with American Sign Language interpretation.

Gemini AI Takes Center Stage

At the heart of this year’s announcements is Gemini, Google’s family of AI models. The company introduced several enhancements, including “Agent Mode,” which allows users to assign complex tasks that the AI can complete independently. For instance, users can instruct Gemini to find apartment listings with specific filters across various platforms.

Google also showed an updated version of “Project Mariner,” an AI agent that can browse the web autonomously and handle up to 10 tasks simultaneously. A notable feature, “Teach and Repeat,” lets users demonstrate a task once so that the AI can learn it and replicate similar tasks in the future.

For those seeking early and comprehensive access to Google’s latest AI tools, the company announced “Google AI Ultra,” a premium subscription priced at $249.99 per month. The plan is aimed at users who want to stay at the cutting edge of AI advancements.

Android 16: A Focus on Personalization and Performance

While AI dominated the keynote, Google also provided insights into Android 16. The upcoming operating system introduces the “Material 3 Expressive” design, which makes greater use of animation, color, and blur for a more dynamic user interface. Notably, Android 16 expands the “Linux Terminal” feature, allowing users to run Linux applications within a virtual machine on their devices and bringing Android closer to a desktop-like experience.

Other enhancements include real-time updates on the lock screen, improved support for foldable and tri-fold devices, and a range of new accessibility features. These updates aim to provide users with a more personalized and efficient experience across various devices.

Project Astra and the Future of AI Interaction

Google DeepMind showcased “Project Astra,” a multimodal AI system capable of understanding and interacting with the world in real time. Demonstrations highlighted Astra’s ability to process visual and auditory information simultaneously, paving the way for more intuitive AI interactions. This technology is expected to play a significant role in the development of AI-powered smart glasses and other extended reality (XR) devices.

Enhanced AI Integration Across Google Services

Beyond standalone AI tools, Google announced deeper AI integration across its suite of services. Gmail’s smart replies will now draw context from both Gmail and Google Drive so that responses better reflect the user’s tone and style. This feature, powered by Gemini, will adjust formality based on the recipient and is set to launch in alpha through Google Labs in July.

In Google Workspace, new features include an “inbox cleanup” tool, AI-assisted meeting scheduling, speech translation in Google Meet, AI avatars in Google Vids, and document-aware writing assistance in Google Docs. These enhancements aim to streamline workflows and improve productivity for users across various platforms.

How to Watch Google I/O 2025

For those interested in tuning in, the main keynote and subsequent sessions are being livestreamed on Google’s official I/O website and YouTube channel. The developer keynote, focusing on technical deep dives, is scheduled for 1:30 p.m. PT on May 20. Viewers can expect a comprehensive look at Google’s latest innovations and future plans across AI, Android, and more.