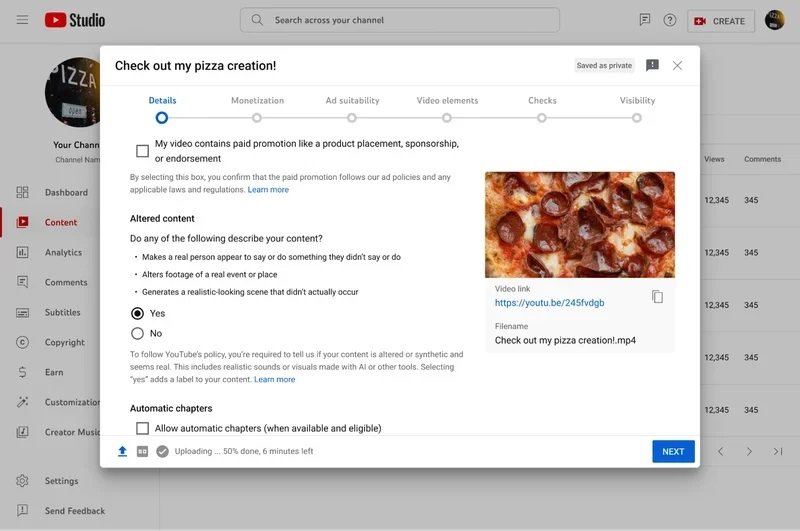

In a significant update to its platform, YouTube has announced a new requirement for content creators using Creator Studio: they must disclose when their videos contain altered or synthetic media created with generative AI that could be mistaken for reality. The initiative aims to boost transparency and build trust between creators and viewers amid growing concern over the authenticity of online content.

Key Highlights:

- Disclosure Requirement: Creators must now label content that utilizes realistic AI-generated imagery or sounds, ensuring viewers are aware of what is real and what is synthetic.

- Focus on Realism: The rule targets content that mimics real people, places, events, or scenarios, where the average viewer might be unable to tell synthetic from real.

- Exceptions: Content that is clearly unrealistic, such as animation or the use of special effects, does not require disclosure.

- Visibility of Labels: Disclosures will appear as labels either in the video’s expanded description or, for greater prominence, directly on the video player.

- Commitment to Transparency: This move is part of YouTube’s broader efforts to increase transparency and authenticity across digital content, in collaboration with the Coalition for Content Provenance and Authenticity (C2PA).

Detailed Insights

YouTube’s policy is a response to the evolving landscape of content creation, where generative AI tools have become increasingly sophisticated, allowing creators to generate realistic-looking content. By implementing these disclosure requirements, YouTube addresses viewer concerns head-on, promoting an informed viewing experience.

The platform outlines specific instances necessitating disclosure, such as videos featuring digitally altered faces or voices that closely mimic real individuals, or manipulated footage of real-world events. The aim is to prevent any potential confusion or misinformation stemming from these creations.

Recognizing the diverse applications of AI in content creation, YouTube clarifies that not all uses of generative AI necessitate disclosure. For example, AI-assisted scriptwriting or the generation of creative ideas remains outside the scope of this policy, as do clearly fictional or heavily stylized pieces.

YouTube also hints at possible enforcement action against creators who consistently fail to disclose, underscoring the importance of compliance. Moreover, the platform is updating its privacy process to allow individuals to request the removal of AI-generated content that impersonates them without consent.

This update reflects YouTube’s ongoing commitment to enhancing digital content integrity, not just within its own platform but across the industry. As part of the C2PA, YouTube continues to work with other leaders in the field to develop standards and practices that ensure content authenticity.

As generative AI technologies grow more embedded in the creative process, YouTube’s new disclosure requirements represent a pivotal step in balancing innovation with ethical considerations. Creators remain at the heart of the platform’s ecosystem, and this policy encourages them to navigate the use of AI tools responsibly, ensuring viewers can trust the content they consume.