The world of mixed reality inches closer to everyday life, and Google is clearly placing its bets. While the company hasn’t held back from discussing its ambitions in this space, a closer look at where and how it is sharing its progress reveals a strategic push centered on the version of Android tailored for extended reality – Android XR. Reports and public statements from various forums show Google is actively working on and demonstrating the foundations of its XR future, signaling that its vast ecosystem could soon extend into spatial computing.

For years, rumors and reports have circulated about Google’s efforts to build hardware for augmented and virtual reality. Projects like the now-shelved Daydream offered early steps into VR, but the focus has visibly shifted towards more advanced, integrated systems capable of both AR and VR experiences – what the industry now often calls “XR.” This shift isn’t just about building glasses; it’s fundamentally about creating the software backbone that will power them. That backbone, Google has made clear, is Android.

Why Android for XR? The logic seems straightforward. Android powers billions of devices around the globe, including smartphones, tablets, watches, TVs, and cars. Its open nature has fostered an unparalleled developer ecosystem and a massive library of applications. By adapting this familiar operating system for mixed reality devices, Google aims to build on that existing strength. Imagine accessing your favorite Android apps – scaled and adapted – within a 3D environment, controlled by gestures or gaze. This is the promise of Android XR.

Google has been discussing its work on Android XR in various public and semi-public settings. A single “TED Talk”-style event devoted entirely to demonstrating finished consumer glasses running Android XR may not be the most prominent recent headline, but Google executives and engineers have certainly taken to stages and shared insights into their progress. Developer conferences, industry summits, and official blog posts serve as the key venues where Google outlines its vision, provides glimpses of the technology, and rallies developer support, a critical step for any new computing platform.

The demonstrations, as described in reports from those who have seen them, often focus on the core capabilities enabled by Android XR. This isn’t just showing off sleek hardware (though prototypes are part of the picture); it’s about proving the software can handle the complex demands of spatial computing. We’re talking about seamless tracking of the user’s head and hands, rendering detailed 3D environments, anchoring virtual objects realistically within the physical world, and managing the power and performance constraints of a wearable device.

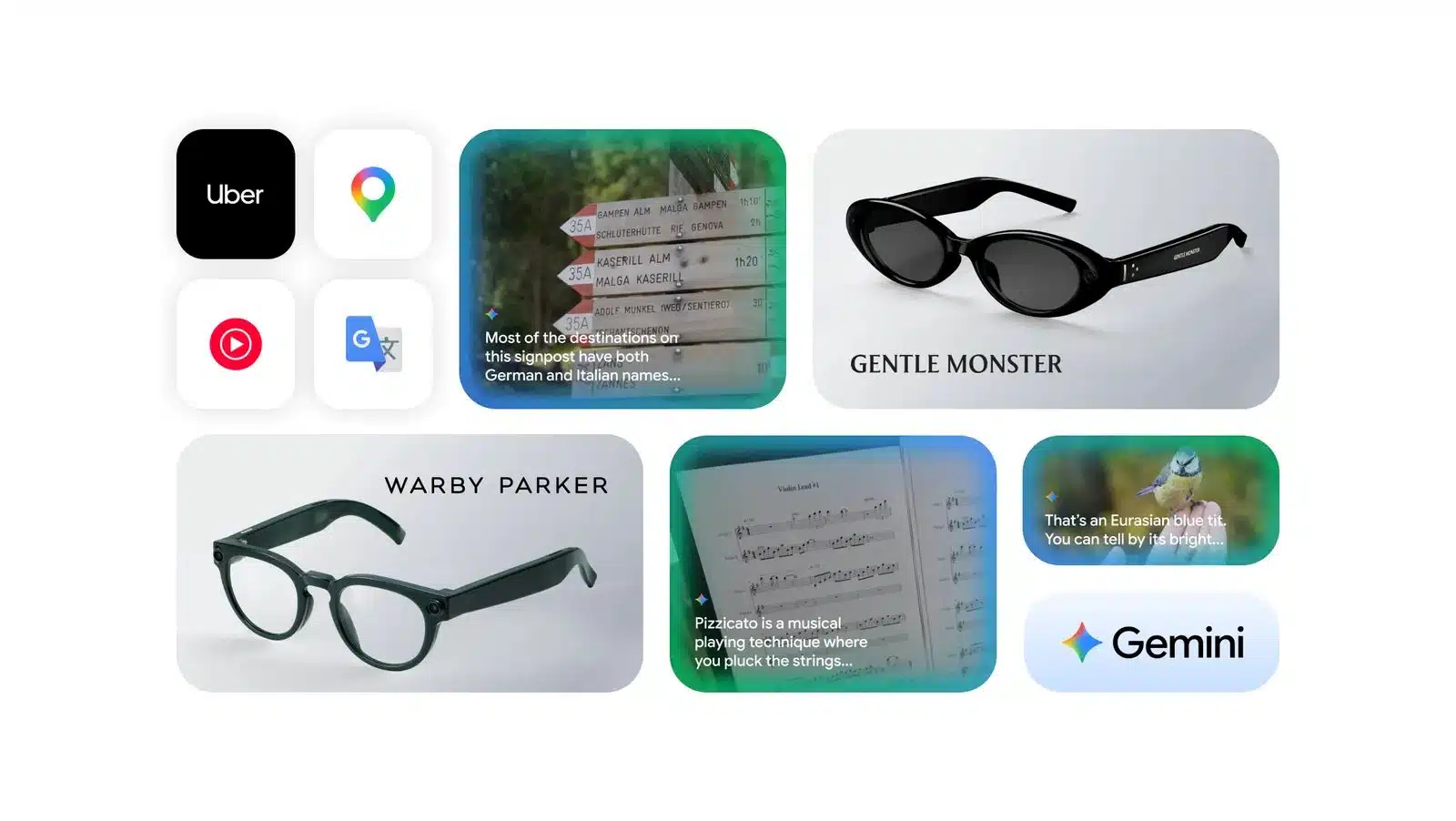

One key aspect often highlighted is the potential for a familiar user interface. Android XR aims to bring the intuitiveness and accessibility of Android to mixed reality. This could mean adapting elements like notifications, multitasking, and even the basic app launcher into a spatial context. For developers, building on Android XR potentially means they can port or adapt their existing Android applications and leverage familiar tools and programming languages, significantly lowering the barrier to entry compared to starting from scratch on a completely new platform.

Imagine wearing a pair of lightweight glasses that look relatively normal. As you walk down the street, notifications from your messaging app might appear subtly overlaid in your peripheral vision. You could get turn-by-turn directions anchored to the actual road ahead. Sitting at your desk, virtual monitors could appear around you, allowing you to multitask across multiple screens that only you can see. These aren’t far-fetched futuristic concepts; they are the kinds of experiences Google is exploring and building towards with Android XR.

Public reports often touch upon Google’s work on reference designs or prototype hardware. While Google itself might not manufacture the final consumer device under its own brand, showing off capable hardware running Android XR demonstrates the platform’s potential and provides a blueprint for other manufacturers. This aligns with Google’s historical approach to Android on smartphones, where it provided the software and reference designs, enabling a wide array of hardware partners to build diverse devices. This strategy could accelerate the adoption of Android XR by the broader electronics industry.

The implications of a powerful, open Android platform for XR are significant. For consumers, it could mean more affordable and varied XR devices, similar to how Android spurred competition and choice in the smartphone market. For developers, it opens up a massive potential user base and provides familiar tools to build next-generation spatial applications – everything from productivity tools and educational experiences to immersive games and social interactions.

Google’s continued public engagement around Android XR, whether through conference presentations, developer previews, or glimpses of prototype hardware, underscores its long-term commitment to this space. The company is not just experimenting; it is building a foundational platform designed to power the next wave of computing devices.

What we see and hear from Google points towards a future where mixed reality isn’t a niche technology but an integrated part of our digital lives, powered by an ecosystem we already know and use every day. The journey is long, and challenges remain – from shrinking the hardware to making the experiences truly compelling and comfortable – but Google’s strategic focus on Android XR suggests the company believes it holds a key piece of the puzzle for unlocking that future. Its public discussions and demonstrations aren’t just technical showcases; they are invitations to developers and partners to help build the spatial internet. Keep watching.